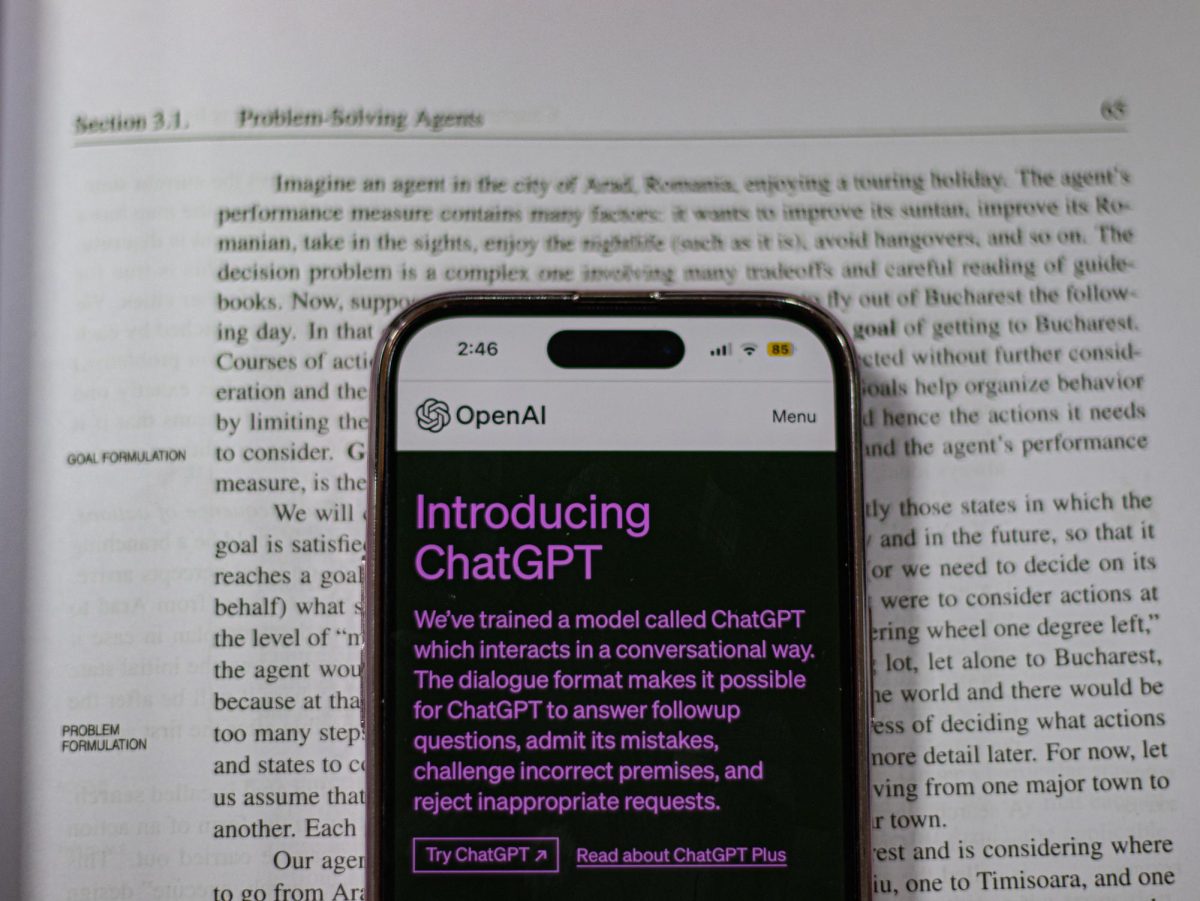

In artificial intelligence, the pressure to make models efficient and cheap to run is driving a shift towards simplicity. Over the past year, models like ChatGPT have moved towards more streamlined versions. This evolution is not necessarily a step towards greater intelligence. Rather, it is a strategic compromise to control server costs and optimize performance.

As the user base of AI models grows, companies must balance functionality against expense. In response, a trend has emerged in which AI models are gradually being simplified, or, as some argue, made "dumber." Companies are building their business models around this transition by offering the pared-down versions for free. This approach lets them save on server capacity and, consequently, on the costs of running resource-intensive, full-scale models.

The rationale behind this shift is that once an AI model has accumulated a substantial amount of user interaction data, the need for the more resource-intensive "smarter" model diminishes. By offering a leaner version for free, companies can keep users engaged while reducing their infrastructure costs.

Users may notice less sophisticated responses, but this trade-off reflects the economic realities of running AI at scale. Providers must strike a delicate balance between delivering a valuable service and sustaining the infrastructure that powers it. Users can expect AI to keep advancing, but they should also expect cost considerations to keep shaping how these systems evolve.